PrivacyNightmare: Difference between revisions

Revision as of 25 September 2013, 23:19

This page aims to gather articles, studies and reports on the risks and excesses caused by weak data protection.

Feel free to contribute by adding links to this pad or by directly editing this page.

Other documents are available in French

Contents

- 1 Studies on re-identification

- 1.1 ArsTechnica - 'Anonymized' data really isn’t—and here’s why not (08 September 2009)

- 1.2 Nature - Unique in the Crowd: The privacy bounds of human mobility (25 March 2013)

- 1.3 University of Cambridge - Digital records could expose intimate details and personality traits of millions (11 March 2013)

- 1.4 Wired - Liking curly fries on Facebook reveals your high IQ (12 March 2013)

- 2 Public positions

- 3 The data industry

- 3.1 MemeBurn - How much are you worth to Facebook? (4 April 2013)

- 3.2 RadioFreeEurope/RadioLiberty - Interview: 'It's Pretty Much Impossible' To Protect Online Privacy (8 April 2013)

- 3.3 SydneyMorningHerald - Facebook 'erodes any idea of privacy' (8 April 2013)

- 3.4 Computerworld - Judge awards class action status in privacy lawsuit vs. comScore (4 April 2013)

- 3.5 GigaOm - Why the collision of big data and privacy will require a new realpolitik (25 March 2013)

- 3.6 WorldCrunch - European Academics Launch Petition To Protect Personal Data From "Huge Lobby" (13 March 2013)

- 4 Data breach

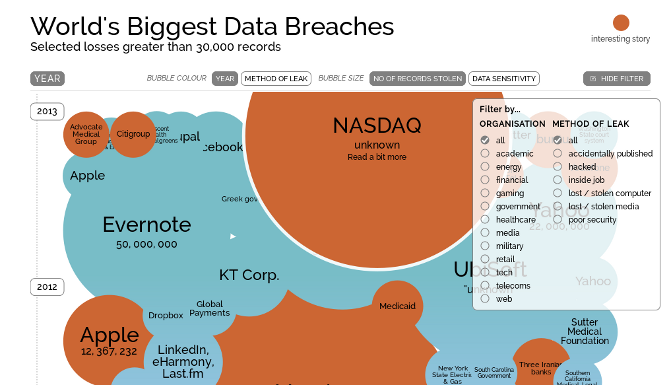

- 4.1 Information is beautiful - World's Biggest Data Breaches

- 4.2 The Register - 35m Google Profiles dumped into private database (25 May 2011)

- 4.3 Bits - Yahoo Breach Extends Beyond Yahoo to Gmail, Hotmail, AOL Users (12 July 2012)

- 4.4 The Huffington Post - Yahoo Confirms 450,000 Accounts Breached, Experts Warn Of Collateral Damage (12 July 2012)

- 4.5 Network World - eHarmony data breach lessons: Cracking hashed passwords can be too easy (6 July 2012)

- 4.6 The New York Times - Lax Security at LinkedIn Is Laid Bare (10 June 2012)

- 4.7 Wired - Cloud Computing Snafu Shares Private Data Between Users (4 February 2013)

- 4.8 Wikipedia Article - Data breach: Major incidents

- 4.9 Wikipedia Fr - Piratage du PlayStation Network

- 5 Surveillance state and its willing helpers

- 6 Employment

- 7 Bank and credit

- 8 General studies

- 8.1 The Boston Consulting Group - The Value of Our Digital Identity (20 November 2012)

- 8.2 The Cost of Reading Privacy Policies. I/S: A Journal of Law and Policy for the Information Society 2008 Privacy Year in Review issue. (with A. McDonald) http://lorrie.cranor.org/#publications Download: http://lorrie.cranor.org/pubs/readingPolicyCost-authorDraft.pdf

- 9 Surveillance state

- 10 Other

Studies on re-identification

ArsTechnica - 'Anonymized' data really isn’t—and here’s why not (08 September 2009)

87 percent of all Americans could be uniquely identified using only three bits of information: ZIP code, birthdate, and sex. [...]

almost all information can be "personal" when combined with enough other relevant bits of data.[...]

That's the claim advanced by Ohm in his lengthy new paper on "the surprising failure of anonymization." As increasing amounts of information on all of us are collected and disseminated online, scrubbing data just isn't enough to keep our individual "databases of ruin" out of the hands of the police, political enemies, nosy neighbors, friends, and spies. [...]

Examples of the anonymization failures aren't hard to find.

When AOL researchers released a massive dataset of search queries, they first "anonymized" the data by scrubbing user IDs and IP addresses. [...] Despite scrubbing the obviously identifiable information from the data, computer scientists were able to identify individual users [...]

The problem was that user IDs were scrubbed but were replaced with a number that uniquely identified each user. This seemed like a good idea at the time, since it allowed researchers using the data to see the complete list of a person's search queries, but it also created problems; those complete lists of search queries were so thorough that individuals could be tracked down simply based on what they had searched for. As Ohm notes, this illustrates a central reality of data collection: "data can either be useful or perfectly anonymous but never both." [...]

Such results are obviously problematic in a world where Google retains data for years, "anonymizing" it after a certain amount of time but showing reticence to fully delete it. "Reidentification science disrupts the privacy policy landscape by undermining the faith that we have placed in anonymization," Ohm writes. "This is no small faith, for technologists rely on it to justify sharing data indiscriminately and storing data perpetually, all while promising their users (and the world) that they are protecting privacy. Advances in reidentification expose these promises as too often illusory." [...]

"For almost every person on earth, there is at least one fact about them stored in a computer database that an adversary could use to blackmail, discriminate against, harass, or steal the identity of him or her. I mean more than mere embarrassment or inconvenience; I mean legally cognizable harm. Perhaps it is a fact about past conduct, health, or family shame. For almost every one of us, then, we can assume a hypothetical 'database of ruin,' the one containing this fact but until now splintered across dozens of databases on computers around the world, and thus disconnected from our identity. Reidentification has formed the database of ruin and given access to it to our worst enemies." [...]
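The 87 percent figure quoted above rests on a simple measurement: how many records are the only ones with a given combination of ZIP code, birthdate and sex. The sketch below is not taken from the article; it is a minimal illustration on invented toy records, and the function name `unique_fraction` and the field names are assumptions made for this example.

```python
from collections import Counter

def unique_fraction(records, quasi_identifiers):
    """Return the share of records whose quasi-identifier combination is unique."""
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    counts = Counter(keys)
    unique = sum(1 for k in keys if counts[k] == 1)
    return unique / len(keys) if keys else 0.0

# Invented toy records, for illustration only (no names or IDs are present).
records = [
    {"zip": "02139", "birthdate": "1970-01-01", "sex": "F"},
    {"zip": "02139", "birthdate": "1970-01-01", "sex": "M"},
    {"zip": "02139", "birthdate": "1982-05-17", "sex": "M"},
    {"zip": "02139", "birthdate": "1982-05-17", "sex": "M"},
    {"zip": "10001", "birthdate": "1990-11-30", "sex": "F"},
]

# Share of records singled out by ZIP code alone, then by all three fields.
share_zip = unique_fraction(records, ["zip"])
share_all = unique_fraction(records, ["zip", "birthdate", "sex"])
print(f"unique by ZIP only: {share_zip:.0%}")
print(f"unique by ZIP + birthdate + sex: {share_all:.0%}")
```

On this toy data, combining the three fields singles out more records than ZIP code alone, which is the effect the article describes. The toy proportions say nothing about real populations.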

Nature - Unique in the Crowd: The privacy bounds of human mobility (25 March 2013)

Apple recently updated its privacy policy to allow sharing the spatio-temporal location of their users with “partners and licensees” [('Apple and our partners and licensees may collect, use, and share precise location data, including the real-time geographic location of your Apple computer or device. This location data is collected anonymously in a form that does not personally identify you') ...] [I]t is estimated that a third of the 25B copies of applications available on Apple's App Store access a user's geographic location and that the geo-location of ~50% of all iOS and Android traffic is available to ad networks. All these are fuelling the ubiquity of simply anonymized mobility datasets and are giving room to privacy concerns.

A simply anonymized dataset does not contain name, home address, phone number or other obvious identifier. Yet, if individual's patterns are unique enough, outside information can be used to link the data back to an individual. [...]

We study fifteen months of human mobility data for one and a half million individuals and find that human mobility traces are highly unique. In fact, in a dataset where the location of an individual is specified hourly, and with a spatial resolution equal to that given by the carrier's antennas, four spatio-temporal points are enough to uniquely identify 95% of the individuals. [...]
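The uniqueness test described in the excerpt above can be pictured as a set-containment check: given a few known (hour, antenna) points, how many traces in the dataset contain all of them? The sketch below is not the authors' code; the traces, the user labels and the function name `matching_users` are invented for illustration.

```python
def matching_users(traces, known_points):
    """Return the user IDs whose trace contains every known (hour, antenna) point."""
    known = set(known_points)
    return sorted(user for user, points in traces.items() if known <= points)

# Invented toy traces: each user maps to a set of (hour, antenna) observations.
traces = {
    "u1": {(8, "A"), (9, "B"), (12, "C"), (18, "A")},
    "u2": {(8, "A"), (9, "B"), (12, "D"), (18, "E")},
    "u3": {(8, "A"), (9, "F"), (12, "C"), (18, "A")},
}

# Each additional known point narrows the set of candidate users.
one_point = matching_users(traces, [(8, "A")])
two_points = matching_users(traces, [(8, "A"), (9, "B")])
three_points = matching_users(traces, [(8, "A"), (9, "B"), (12, "C")])
print(one_point, two_points, three_points)
```

A trace counts as uniquely identified once the candidate list shrinks to a single user. The study reports that four points suffice for 95% of individuals in its dataset; the toy data here only shows the mechanism.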

University of Cambridge - Digital records could expose intimate details and personality traits of millions (11 March 2013)

Research shows that intimate personal attributes can be predicted with high levels of accuracy from ‘traces’ left by seemingly innocuous digital behaviour, in this case Facebook Likes.

In the study, researchers describe Facebook Likes as a “generic class” of digital record - similar to web search queries and browsing histories - and suggest that such techniques could be used to extract sensitive information for almost anyone regularly online.

Researchers at Cambridge’s Psychometrics Centre, in collaboration with Microsoft Research Cambridge, analysed a dataset of over 58,000 US Facebook users, who volunteered their Likes, demographic profiles and psychometric testing results through the myPersonality application.

Users opted in to provide data and gave consent to have profile information recorded for analysis. Facebook Likes were fed into algorithms and corroborated with information from profiles and personality tests.

Researchers created statistical models able to predict personal details using Facebook Likes alone. Models proved 88% accurate for determining male sexuality, 95% accurate distinguishing African-American from Caucasian American and 85% accurate differentiating Republican from Democrat. Christians and Muslims were correctly classified in 82% of cases, and good prediction accuracy was achieved for relationship status and substance abuse – between 65 and 73%. [...]

The researchers also tested for personality traits including intelligence, emotional stability, openness and extraversion. While such latent traits are far more difficult to gauge, the accuracy of the analysis was striking. Study of the openness trait – the spectrum of those who dislike change to those who welcome it – revealed that observation of Likes alone is roughly as informative as using an individual’s actual personality test score.

Some Likes had a strong but seemingly incongruous or random link with a personal attribute, such as Curly Fries with high IQ, or That Spider is More Scared Than U Are with non-smokers.

When taken as a whole, researchers believe that the varying estimations of personal attributes and personality traits gleaned from Facebook Like analysis alone can form surprisingly accurate personal portraits of potentially millions of users worldwide. [...]

“Similar predictions could be made from all manner of digital data, with this kind of secondary ‘inference’ made with remarkable accuracy - statistically predicting sensitive information people might not want revealed. Given the variety of digital traces people leave behind, it’s becoming increasingly difficult for individuals to control." [...]

Wired - Liking curly fries on Facebook reveals your high IQ (12 March 2013)

What you Like on Facebook could reveal your race, age, IQ, sexuality and other personal data, even if you've set that information to "private". [...] The research shows that although you might choose not to share particular information about yourself it could still be inferred from traces left on social media, such as the TV shows you watch or the music you listen to or the spiders that are afraid of you. [...]

Public positions

More than 100 Leading European Academics are taking a position

The core argument against the proposed data protection regulation is that the regulation will negatively impact innovation and competition. Critics argue that the suggested data protection rules are too strong and that they curb innovation to a degree that disadvantages European players in today’s global marketplace. We do not agree with this opinion. On the contrary, we have seen that a regulatory context can promote innovation. [...] For many important business processes, it is not data protection regulation that prevents companies from adopting cloud computing services; rather it is uncertainty over data protection itself. [...]

Since 1995, usage of personal data in the European Union has relied on the principle of informed consent. This principle is the lynchpin of informational self-determination. However, few would dispute that it has not been put into practice well enough so far. On one side, users criticise that privacy statements and general terms and conditions are difficult to read and leave users without choices: If one wants to use a service, one must more or less blindly confirm. On the other side, companies see the legal design of their data protection terms as a tightrope walk. Formulating data protection terms is viewed as a costly exercise. At the same time, customers are overstrained or put off by the small print.

As a result, many industry representatives suggest an inversion of the informed consent principle and an embrace of an opt-out principle, as is experienced today in the USA. In the USA, most personal data handling practices are initially allowed to take place as long as the user does not opt out.

The draft regulation, in contrast, strengthens informational self-determination. Explicit informed consent is preserved. Moreover, where there is a significant imbalance between the position of the data subject and the controller, consent shall not provide a legal basis for the processing. The coupling of service usage with personal data usage is even prohibited if that usage extends beyond the immediate context of customer service interaction.

We support the draft of the data protection regulation because we believe that explicit informed consent is indispensable. [A]n inversion of the informed consent principle into an opt-out principle considerably weakens the position of citizens. Such an inversion gives less control to individuals and therefore reduces their trust in the Internet. [...]

Currently, companies can process personal data without client consent if they can argue that they have a legitimate interest in the use of that data. So far, unfortunately, the term “legitimate interest” leaves plenty of room for interpretation: When is an interest legitimate and when is it not?

To prevent abuse of this rule, which is reasonable in principle, the new data protection regulation defines and balances the legitimate interests of companies and customers. The regulation requires that companies not only claim a legitimate interest, but also justify it. Moreover, the draft report of the European Parliament’s rapporteur now outlines legitimate interests of citizens. It determines where the interests of citizens outweigh company interests and vice-versa. In the proposed regulatory amendments provided by the rapporteur, citizens have a legitimate interest that profiles are not created about them without their knowledge and that their data is not shared with a myriad of third parties that they do not know about. We find this balancing of interests a very fair offer that aligns current industry best practices with the interests of citizens. [...]

Online users are often identified implicitly; that is, users are identified by the network addresses of their devices (IP addresses) or by cookies that are set in web browsers. Implicit identifiers can be used to create profiles. Some of these implicit identifiers change constantly, which is why at first sight they seem unproblematic from a data protection perspective. To some, it may appear as if individuals could not be re-identified on the basis of such dynamic identifiers. However, many experiments have shown that such re-identification can be done.

Despite the undisputable ability to build profiles and re-identify data, some industry representatives maintain that data linked to implicit identifiers should not be covered by the regulation. They argue that Internet companies that collect a lot of user data are only interested in aggregated and statistical data and would therefore not engage in any re-identification practices.

For technical, economical and legal reasons we cannot follow this opinion. Technically, it is easy to relate data collected over a long period of time to a unique individual. Economically, it may be true that the identification of individuals is not currently an industry priority. However, the potential for this re-identification is appealing and can therefore not be excluded from happening. Legally, we must protect data that may be re-identifiable at some point, as such precautionary measures could prove to be the only effective remedy.

Some EU parliamentarians suggest that anonymized, pseudonymized and encrypted data should generally not be covered by the data protection regulation. They argue that such data is not “personal” any more. This misconception is dangerous. Indisputably, anonymization, pseudonymization, and encryption are useful instruments for technical data protection: Encryption helps to keep data confidential. Pseudonyms restrict knowledge about individuals and their sensitive data (e.g., the relation between the medical data of a patient) to those that really need to know it. However, in many cases even this kind of protected data can be used to re-identify individuals. We therefore believe that this type of data also needs to be covered by the data protection regulation [...]

The data industry

MemeBurn - How much are you worth to Facebook? (4 April 2013)

The argument that hundreds of millions of people give away their personal data on social networks with absolutely no interest in the commercial value of that information does not make sense. It is simply the case that they don’t have the slightest idea. [...] According to Spiekerman: “Even if privacy is an inalienable human right it would be good if people were enabled to manage their personal data as private property.” It’s not only about “monetizing”. The earth is, happily, not that flat. But materializing privacy might help us to overcome the huge issues we have when it comes to the privacy of internet users, and finally social networks and marketing will profit from more knowledge and more trust in the use of personal data.

RadioFreeEurope/RadioLiberty - Interview: 'It's Pretty Much Impossible' To Protect Online Privacy (8 April 2013)

From online companies tracking users' digital footprints to the trend for more and more data to be stored on cloud servers, Internet privacy seems like a thing of the past -- if it ever existed at all. RFE/RL correspondent Deana Kjuka recently spoke about these issues with online security analyst Bruce Schneier, author of the book "Liars and Outliers: Enabling the Trust Society Needs to Survive." [...] "If [you use] Gmail, [then] Google has all of your e-mail. If your files are in Dropbox, if you are using Google Docs, [or] if your calendar is iCal, then Apple has your calendar. So it just makes it harder for us to protect our privacy because our data isn't in our hands anymore." "I don't know about the future, but my guess is that, yes. The big risks are not going to be the illegal risks. They are going to be the legal risks. It's going to be governments. It's going to be corporations. It's going to be those in power using the Internet to stay in power."

SydneyMorningHerald - Facebook 'erodes any idea of privacy' (8 April 2013)

Facebook Home for Android phones has been dubbed by technologists as the death of privacy and the start of a new wave of invasive tracking and advertising. [...] Prominent tech blogger Om Malik wrote that Home “erodes any idea of privacy”. “If you install this, then it is very likely that Facebook is going to be able to track your every move, and every little action,” said Malik. “This opens the possibility up for further gross erosions of privacy on unsuspecting users, all in the name of profits, under the guise of social connectivity,” he said. [...]

Computerworld - Judge awards class action status in privacy lawsuit vs. comScore (4 April 2013)

A federal court in Chicago this week granted class action status to a lawsuit accusing comScore, one of the Internet's largest user tracking firms, of secretly collecting and selling Social Security numbers, credit card numbers, passwords and other personal data collected from consumer systems. [...] To collect data, comScore's software modifies computer firewall settings, redirects Internet traffic, and can be upgraded and controlled remotely, the complaint alleged. The suit challenged comScore's assertions that it filtered out personal information from data sold to third parties, and accused it of intercepting data it had no business to access. [...]

GigaOm - Why the collision of big data and privacy will require a new realpolitik (25 March 2013)

People’s movements are highly predictable, researchers say, making it easy to identify most individuals from supposedly anonymized location datasets. As these datasets have valid uses, this is yet another reason why we need better regulation. [...] One of the explicit purposes of Unique in the Crowd was to raise awareness. As the authors put it: “these findings represent fundamental constraints to an individual’s privacy and have important implications for the design of frameworks and institutions dedicated to protect the privacy of individuals.” [...]

WorldCrunch - European Academics Launch Petition To Protect Personal Data From "Huge Lobby" (13 March 2013)

This week, more than 90 leading academics across Europe launched a petition to support the European Commission’s draft data protection regulation, reports the EU Observer. The online petition, entitled Data Protection in Europe, says “huge lobby groups are trying to massively influence the regulatory bodies.” The goal of the site is to make sure the European Commission’s law is in line with the latest technologies and that the protection of personal data is guaranteed. [...]

Data breach

Information is beautiful - World's Biggest Data Breaches

The Register - 35m Google Profiles dumped into private database (25 May 2011)

Proving that information posted online is indelible and trivial to mine, an academic researcher has dumped names, email addresses and biographical information made available in 35 million Google Profiles into a massive database that took just one month to assemble.

University of Amsterdam Ph.D. student Matthijs R. Koot said he compiled the database as an experiment to see how easy it would be for private detectives, spear phishers and others to mine the vast amount of personal information stored in Google Profiles. The verdict: It wasn't hard at all. Unlike Facebook policies that strictly forbid the practice, the permissions file for the Google Profiles URL makes no prohibitions against indexing the list.

What's more, Google engineers didn't impose any technical limitations in accessing the data, which is made available in an extensible markup language file called profiles-sitemap.xml. [...]

The database compiled by Koot contains names, educational backgrounds, work histories, Twitter conversations, links to Picasa photo albums, and other details made available in 35 million Google Profiles. It comprises the usernames of 11 million of the profile holders, making their Gmail addresses easy to deduce. [...]

Bits - Yahoo Breach Extends Beyond Yahoo to Gmail, Hotmail, AOL Users (12 July 2012)

Yahoo confirmed Thursday that about 400,000 user names and passwords to Yahoo and other companies were stolen on Wednesday.

A group of hackers, known as the D33D Company, posted online the user names and passwords for what appeared to be 453,492 accounts belonging to Yahoo, and also Gmail, AOL, Hotmail, Comcast, MSN, SBC Global, Verizon, BellSouth and Live.com users. [...]

The hackers wrote a brief footnote to the data dump, which has since been taken offline: “We hope that the parties responsible for managing the security of this subdomain will take this as a wake-up call, and not as a threat.”

The Huffington Post - Yahoo Confirms 450,000 Accounts Breached, Experts Warn Of Collateral Damage (12 July 2012)

Security researchers warned Thursday that thousands of people could be vulnerable to hackers after Yahoo confirmed that about 450,000 usernames and passwords were stolen from one of the company's databases [...]

Yahoo Voices contributors signed up using a variety of accounts: about 140,000 Yahoo addresses, more than 100,000 Gmail addresses, more than 55,000 Hotmail addresses and more than 25,000 AOL addresses. [...]

A hacker group called D33D claimed responsibility for the disclosure of usernames and passwords belonging to Yahoo Voices' users.

"We hope that the parties responsible for managing the security of this subdomain will take this as a wake-up call, and not as a threat," the group said in a statement. [...]

Alex Horan, a senior product manager at CORE Security, criticized Yahoo for apparently storing usernames and passwords without encrypting them.

"The bigger problem is these passwords were sitting there in the clear," Horan said. He added that encrypting passwords was "Security 101."

"That’s mind-blowing that a company wouldn't do that," he said. [...]

Network World - eHarmony data breach lessons: Cracking hashed passwords can be too easy (6 July 2012)

Last month the dating site eHarmony suffered a data breach in which more than 1.5 million eHarmony password hashes were stolen and later dumped online by the hacker gang called Doomsday Preppers. The crypto-based "hashing" process is supposed to conceal stored passwords, but Trustwave's SpiderLabs division says eHarmony could have done this process a lot better because it only took 72 hours to crack about 80% of 1.5 million eHarmony hashed passwords that were dumped.

Cracking the dumped eHarmony passwords wasn't too hard, says Mike Kelly, security analyst at SpiderLabs, which used tools such as oclHashcat and John the Ripper. In fact, he says it was one of the "easiest" challenges he ever faced. There are many reasons why this is so, starting with the fact the cracked passwords may have been "hashed," but they weren't "salted," which he says "would drastically increase the time it would take to crack them." [...]

The New York Times - Lax Security at LinkedIn Is Laid Bare (10 June 2012)

Last week, hackers breached the site and stole more than six million of its customers’ passwords, which had been only lightly encrypted. [...]

What has surprised customers and security experts alike is that a company that collects and profits from vast amounts of data had taken a bare-bones approach to protecting it. The breach highlights a disturbing truth about LinkedIn’s computer security: there isn’t much. Companies with customer data continue to gamble on their own computer security, even as the break-ins increase.

“If they had consulted with anyone that knows anything about password security, this would not have happened,” said Paul Kocher, president of Cryptography Research, a San Francisco computer security firm. [...]

LinkedIn does not have a chief security officer whose sole job it is to monitor for breaches. The company says David Henke, its senior vice president for operations, oversees security in addition to other roles, but Mr. Henke declined to speak for this article. [...]

Wired - Cloud Computing Snafu Shares Private Data Between Users (4 February 2013)

A low-cost competitor to giants such as RackSpace and Amazon, DigitalOcean sells cheap computing power to web developers who want to get their sites up and running for as little as $5 per month. But it turns out that some of those customers — those who were buying the $40 per month or $80 per month plans, for example — aren’t necessarily getting their data wiped when they cancel their service. And some of that data is viewable to other customers. Kenneth White stumbled across several gigabytes of someone else’s data when he was noodling around on DigitalOcean’s service last week. White, who is chief of biomedical informatics with Social and Scientific Systems, found e-mail addresses, web links, website code and even strings that look like usernames and passwords — things like 1234qwe and 1234567passwd. [...]

Wikipedia Article - Data breach: Major incidents

Wikipedia Fr - Piratage du PlayStation Network

Surveillance state and its willing helpers

Harvard Law Review: The Dangers of Surveillance (2012)

"From the Fourth Amendment to George Orwell’s Nineteen Eighty-Four, and from the Electronic Communications Privacy Act to films like Minority Report and The Lives of Others, our law and literature are full of warnings about state scrutiny of our lives. These warnings are commonplace, but they are rarely very specific. Other than the vague threat of an Orwellian dystopia, as a society we don’t really know why surveillance is bad, and why we should be wary of it. To the extent the answer has something to do with “privacy,” we lack an understanding of what “privacy” means in this context, and why it matters. We’ve been able to live with this state of affairs largely because the threat of constant surveillance has been relegated to the realms of science fiction and failed totalitarian states."

Employment

OnDevice - Facebook costing 16-34s jobs in tough economic climate http://ondeviceresearch.com/blog/facebook-costing-16-34s-jobs-in-tough-economic-climate

The index, which covers 6,000 16-34 year olds across six countries, revealed some surprising results[:] If getting a job was not hard enough in this tough economic climate, one in ten young people have been rejected for a job because of their social media profile.

Bank and credit

CNN - Facebook friends could change your credit score (27 August 2013)

“[...] some financial lending companies have found that social connections can be a good indicator of a person's creditworthiness.

One such company, Lenddo, determines if you're friends on Facebook (FB) with someone who was late paying back a loan to Lenddo. If so, that's bad news for you. It's even worse news if the delinquent friend is someone you frequently interact with.

"It turns out humans are really good at knowing who is trustworthy and reliable in their community," said Jeff Stewart, a co-founder and CEO of Lenddo. "What's new is that we're now able to measure through massive computing power."

A German company called Kreditech says that it uses up to 8,000 data points when assessing an application for a loan.

In addition to data from Facebook, eBay or Amazon accounts, Kreditech also gathers information from the manner in which a customer fills out the online application. For example, your chances of getting a loan improve if you spend time reading information about the loan on Kreditech's website. If you fill out the application typing in all-caps (or with no caps), you're knocked down a couple pegs in Kreditech's eyes.

Kreditech can determine your location and considers creditworthiness based upon whether your computer is located where you said you live or work.

The individual data points may not have meaning themselves, but can paint a good picture of the applicant when brought together, said Sebastian Diemer, a co-founder of Kreditech.

Another company, Kabbage, an online service that offers cash advances to small businesses, considers an owner's FICO score -- but only as one piece of a larger pie.

"We can get much better, faster data," said Marc Gorlin, Kabbage's chairman and co-founder.

Borrowers grant Kabbage access to their PayPal, eBay and other online payment accounts, disclosing real-time sales and delivery information. The company says it can determine a business' creditworthiness and put money into its account in just seven minutes.

Once a small business is getting credit from Kabbage, it also has the option to link up its Facebook and Twitter accounts to the site, which could provide a bump in its "Kabbage score." The small businesses that do are 20% less likely to be delinquent on their loans, Gorlin said.”

General studies

The Boston Consulting Group - The Value of Our Digital Identity (20 November 2012)

The Value of Our Digital Identity, a new report by The Boston Consulting Group in the Liberty Global Policy Series, takes a unique approach to understanding this new phenomenon. It quantifies, for the first time, the current and potential economic value of digital identity. It also explores—through research involving more than 3,000 individuals—the value that consumers place on their personal information and how they make decisions about whether or not to share it. Building on these findings, the report presents a new paradigm for unlocking the full value of digital identity in a sustainable, consumer-centered way. [...]

The report shows that the value created through digital identity can indeed be massive: €1 trillion in Europe by 2020 [...]

Individuals with a higher than average awareness of how their data are used require 26 percent more benefit in return for sharing their data. Meanwhile, consumers who are able to manage their privacy are up to 52 percent more willing to share information than those who aren’t. [...]

Given proper privacy controls and sufficient benefits, the survey found, most consumers are willing to share their personal data. To ensure that the flow of personal information continues, organizations therefore need to make the benefits clear to consumers. They also need to embrace responsibility, transparency, and user control. [...]

The Cost of Reading Privacy Policies. I/S: A Journal of Law and Policy for the Information Society 2008 Privacy Year in Review issue. (with A. McDonald) http://lorrie.cranor.org/#publications Download: http://lorrie.cranor.org/pubs/readingPolicyCost-authorDraft.pdf

It would take each American 244 hours per year to read privacy policies… cf. page 18. About 40 minutes every day.
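The daily figure follows directly from the annual one. A quick check, assuming a 365-day year (the variable names are illustrative):

```python
HOURS_PER_YEAR = 244   # annual reading time quoted from the paper
DAYS_PER_YEAR = 365    # assumption: reading spread evenly over a 365-day year

# Convert hours per year to minutes per day.
minutes_per_day = HOURS_PER_YEAR * 60 / DAYS_PER_YEAR
print(f"{minutes_per_day:.1f} minutes per day")
```

This gives roughly 40 minutes per day, matching the note above.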

Surveillance state

Other

EuropaQuotidiano - Facebook, i Big Data e la fine della privacy

Euobserver - Academics line up to defend EU data protection law